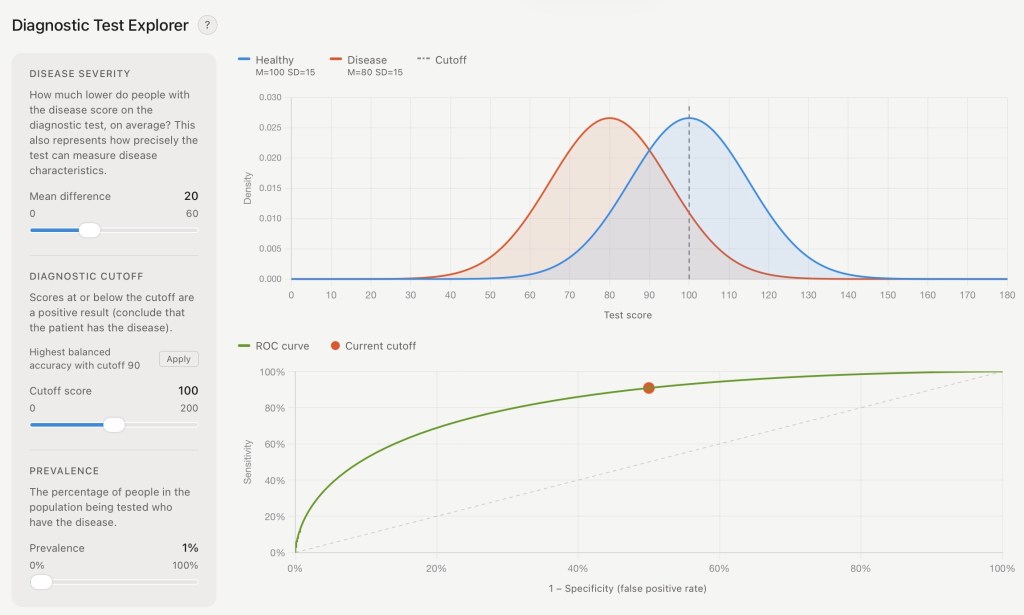

TL;DR: I vibe coded a little app that lets you explore how various things affect the accuracy of a diagnostic test. You can try it out here: Diagnostic Test Explorer.

Your doctor gives you a test with 90% accuracy. “If someone has this disease, then there’s a 90% chance this red light will turn on,” she says. The light turns red and your face turns pale. Does this mean you probably have the disease? Are you a diseased person now? “Well … maybe,” your doctor says.

Your doctor is right to be uncertain. Good doctors are uncertain about diagnostic tests.

Why? Because “accuracy” can mean different things for different outcomes and different scenarios. Above, we’re talking about sensitivity: in a group of people who definitely have the disease, how many test positive? We said it’s 90%, which sounds pretty good.

But consider a rare disease that only 1% of the population has. People who have the disease usually score worse on the diagnostic test (by about 1 standard deviation on average), but people who don’t have the disease sometimes score badly too. Now you’re positive on this “90% accurate” test, so what are your chances of having the disease?

Something like 1.5%.

The group of people with the disease is almost always positive on the test, but it’s a small group, so you’re probably not in it. The healthy group is just bigger.
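To check that 1.5% figure, here’s a minimal Python sketch under one assumed model, since the exact distributions aren’t spelled out above: healthy scores follow a standard normal curve, the disease shifts scores down by 1 standard deviation, and any score below a cutoff counts as “positive.” The function name `binormal_ppv` and the “lower is worse” convention are my own illustrative assumptions, not necessarily how the app computes things.

```python
from statistics import NormalDist


def binormal_ppv(prevalence: float, sensitivity: float, separation_sd: float) -> dict:
    """Positive predictive value under an ASSUMED equal-variance binormal model.

    Illustrative assumptions (not from any specific real test):
    - healthy scores ~ Normal(0, 1)
    - diseased scores ~ Normal(-separation_sd, 1), i.e. sick people score worse
    - a score below the cutoff counts as "positive"
    """
    healthy = NormalDist(mu=0.0, sigma=1.0)
    diseased = NormalDist(mu=-separation_sd, sigma=1.0)

    # Choose the cutoff so the requested fraction of sick people test positive.
    cutoff = diseased.inv_cdf(sensitivity)

    # Fraction of healthy people who also land below the cutoff (false alarms).
    false_positive_rate = healthy.cdf(cutoff)
    specificity = 1.0 - false_positive_rate

    # Bayes' rule: P(disease | positive result).
    true_pos = sensitivity * prevalence
    false_pos = false_positive_rate * (1.0 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    return {"cutoff": cutoff, "specificity": specificity, "ppv": ppv}


result = binormal_ppv(prevalence=0.01, sensitivity=0.90, separation_sd=1.0)
print(f"specificity = {result['specificity']:.1%}")
print(f"P(disease | positive) = {result['ppv']:.1%}")
```

Under these assumptions, specificity comes out around 39% and the chance of disease after a positive result lands around 1.5%. Try a bigger `separation_sd` to see how much a test with better-separated groups helps.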

Most Tests Only Nudge Uncertainty

Your positive test told you something—your overall chance of having the disease was about 1% when you knew nothing, and it’s 1.5% now that you have additional test information. That’s still valuable—a nudge in the right direction. The overall effect on your risk is not intuitive, though, and you and your doctor still have a lot of uncertainty.
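The “nudge” is easiest to see in the odds form of Bayes’ rule: posterior odds = prior odds × positive likelihood ratio, where the positive likelihood ratio is sensitivity / (1 - specificity). A ratio near 1 barely moves you. In this sketch the 39% specificity is an assumed value, roughly what you get if healthy and sick scores are normal curves 1 standard deviation apart and the cutoff is set for 90% sensitivity. The helper name `posterior_probability` is hypothetical.

```python
def posterior_probability(prior: float, sensitivity: float, specificity: float) -> float:
    """Update a prior probability after a POSITIVE result, via the odds form of Bayes' rule."""
    # Positive likelihood ratio: how much likelier a positive result is in sick vs. healthy people.
    lr_positive = sensitivity / (1.0 - specificity)
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * lr_positive
    return posterior_odds / (1.0 + posterior_odds)


# 1% prior from the rare-disease example; 0.39 specificity is an ASSUMED value.
prior = 0.01
post = posterior_probability(prior, sensitivity=0.90, specificity=0.39)
print(f"before the test: {prior:.1%}, after a positive result: {post:.1%}")
```

Here the likelihood ratio is only about 1.5, so a positive result multiplies your odds by about 1.5, which is why roughly 1% becomes roughly 1.5%.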

There could be ways to make the test more useful. It might seem like pumping up that sensitivity even higher would help, but for the scenario above, it’s actually more important to avoid introducing more false positives in healthy people, which could mean lower sensitivity. Figuring out what to do and how to interpret tests requires a lot of math, because like I said, “accuracy” isn’t just one thing; there are, like, 20 numbers that define accuracy for different people in different scenarios, and changing any one number also changes all the other numbers.
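To see how those numbers hang together, here’s a sketch that splits a hypothetical town of 10,000 people into the four cells of a 2×2 table and derives several “accuracy” numbers from those cells. The town, the assumed 39% specificity, and the helper names `counts_for` and `metrics_from_counts` are all made up for illustration. Sensitivity and specificity stay put, while PPV, NPV, and overall accuracy all shift when only the prevalence changes.

```python
def counts_for(population: int, prevalence: float, sensitivity: float, specificity: float):
    """Split a HYPOTHETICAL population into the four cells of a 2x2 table."""
    sick = round(population * prevalence)
    healthy = population - sick
    tp = round(sick * sensitivity)      # sick and positive
    fn = sick - tp                      # sick but negative (missed)
    tn = round(healthy * specificity)   # healthy and negative
    fp = healthy - tn                   # healthy but positive (false alarm)
    return tp, fp, fn, tn


def metrics_from_counts(tp: int, fp: int, fn: int, tn: int) -> dict:
    """A few of the many 'accuracy' numbers, all computed from the same four counts."""
    sens = tp / (tp + fn)
    spec = tn / (tn + fp)
    return {
        "sensitivity": sens,
        "specificity": spec,
        "ppv": tp / (tp + fp),                        # P(sick | positive)
        "npv": tn / (tn + fn),                        # P(healthy | negative)
        "accuracy": (tp + tn) / (tp + fp + fn + tn),  # fraction of all results that are right
        "lr_pos": sens / (1 - spec),                  # positive likelihood ratio
        "lr_neg": (1 - sens) / spec,                  # negative likelihood ratio
    }


# Same ASSUMED test (90% sensitivity, 39% specificity), two different populations.
for prevalence in (0.01, 0.10):
    counts = counts_for(10_000, prevalence, sensitivity=0.90, specificity=0.39)
    print(f"prevalence {prevalence:.0%}: tp, fp, fn, tn = {counts}")
    for name, value in metrics_from_counts(*counts).items():
        print(f"  {name}: {value:.3f}")
```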

A Tool To Help Understand Medical Tests Better

That’s complicated, so I built a tool to explore how diagnostic testing accuracy works, without having to get any diseases. Try it out here: Diagnostic Test Explorer.

I wanted this to be easy to play with, so you can just mess with sliders and see what happens. That always helps me understand better at an intuitive level, and I couldn’t find any calculators out there that combine simple numbers with visuals. Don’t rely on this for medical advice, obviously.

I did this to help my own brain understand, because in my day job I’m trying to make brain tests that are accurate for all people at all times, and that’s hard to do and hard to explain. With this tool, you can show, for example, that boosting sensitivity by being more liberal with your threshold for what you consider “positive” comes at the cost of lower specificity, which makes it harder to be confident in a positive result. Good doctors understand this, and know that the results of any single test can make you certain about some things but will likely leave you uncertain about other things. That’s why they have multiple techniques and big brains.
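Here’s a rough Python sketch of that threshold trade-off. It assumes healthy and sick scores are normal curves 1 standard deviation apart, that a lower score counts as positive, and that 1% of people are sick; `operating_point` is a hypothetical helper, not something taken from the app.

```python
from statistics import NormalDist

# ASSUMED model (illustrative only): healthy scores ~ N(0, 1), diseased scores ~ N(-1, 1),
# a score below the cutoff counts as "positive", and prevalence is 1%.
HEALTHY = NormalDist(0.0, 1.0)
DISEASED = NormalDist(-1.0, 1.0)
PREVALENCE = 0.01


def operating_point(cutoff: float) -> tuple[float, float, float]:
    """Return (sensitivity, specificity, PPV) for one cutoff under the assumed model."""
    sensitivity = DISEASED.cdf(cutoff)        # share of sick people below the cutoff
    specificity = 1.0 - HEALTHY.cdf(cutoff)   # share of healthy people above the cutoff
    true_pos = sensitivity * PREVALENCE
    false_pos = (1.0 - specificity) * (1.0 - PREVALENCE)
    ppv = true_pos / (true_pos + false_pos)
    return sensitivity, specificity, ppv


# Raising the cutoff is the "more liberal" direction: more scores count as positive.
for cutoff in (-2.0, -1.0, 0.0, 1.0):
    sens, spec, ppv = operating_point(cutoff)
    print(f"cutoff {cutoff:+.1f}: sensitivity {sens:.0%}, specificity {spec:.0%}, PPV {ppv:.1%}")
```

As the cutoff moves up, sensitivity climbs while specificity falls, and in this rare-disease setting the PPV falls with it, so each positive result tells you a little less.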

Have you heard about AI? That’s how I created this, through what kids call “vibe coding” with Claude. I gotta admit, it was kind of awe-inspiring to just ask for an app and have a machine create it. I don’t like the idea of AI being used for slop that replaces creative pursuits by stealing art from centuries of hard work by real human artists. This coding use feels more like a “tool” (traditional software with extra intelligence) than the plagiarism machine that pirates my books and generates fake women to catfish your uncle. Coders might feel differently, it’s still not ethically pure, and we contain multitudes. Hopefully we at least contain a better understanding of medical tests now.

Trust Your Uncertain Doctor

Good clinicians know that testing is complicated. Evaluating the accuracy of a test, the efficacy of a treatment, or the quality of a scientific paper requires complex mathematics, and more importantly, skeptical thinking. Accepting the result of a test can be comforting in its certainty, but reality is uncertain. In the journeys of medicine and life, we’ll never know anything without a doubt, but with each step we take, we can know a little more than we knew before.